pharmapsychotic on Twitter: "#stablediffusion2 uses the OpenCLIP ViT-H model trained on the LAION dataset so it knows different things than the OpenAI ViT-L we're all used to prompting. To help out with

Image-text similarity score distributions using CLIP ViT-B/32 (left)... | Download Scientific Diagram

2 plastic Clip'vit+ clip-on brackets for "3 in 1" window curtain rod, white - MOBOIS - Mr.Bricolage

We apply the same set of hyperparameters to fine-tune both ResNet CLIP... | Download Scientific Diagram

Amazon.com: Chip Clips, Chip Clips Bag Clips Food Clips, Bag Clips for Food, Chip Bag Clip, Food Clips, PVC-Coated Clips for Food Packages, Paper Clips, Clothes Pin(Mixed Colors 30 PCs) : Home

GitHub - LightDXY/FT-CLIP: CLIP Itself is a Strong Fine-tuner: Achieving 85.7% and 88.0% Top-1 Accuracy with ViT-B and ViT-L on ImageNet

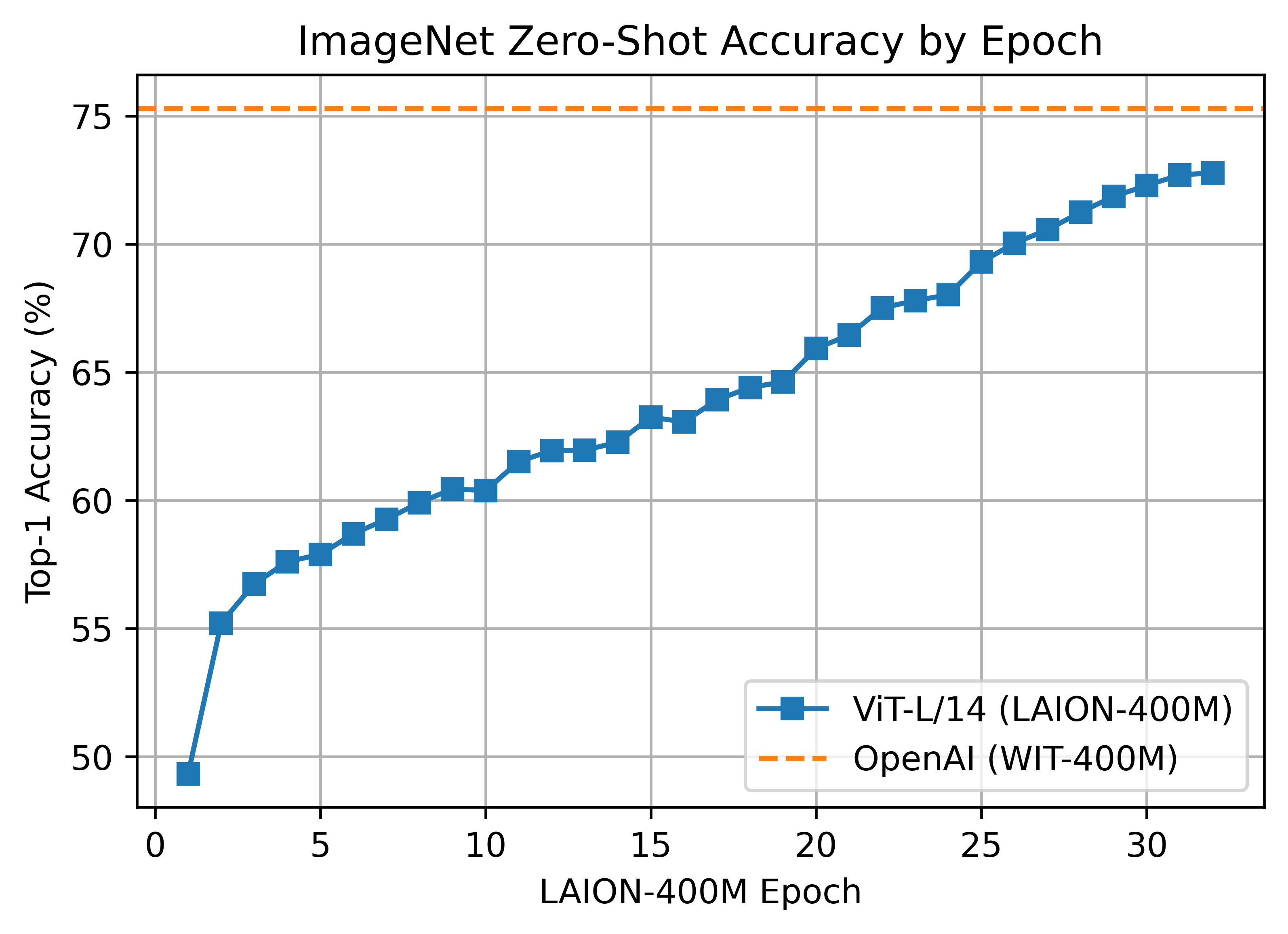

apolinário (multimodal.art) on Twitter: "Yesterday OpenCLIP released the first LAION-2B trained perceptor! a ViT-B/32 CLIP that surpasses OpenAI's ViT-B/32 quite significantly: https://t.co/X4vgW4mVCY https://t.co/RLMl4xvTlj" / Twitter

Principal components from PCA were computed on Clip-ViT-B-32 embeddings... | Download Scientific Diagram

CLIP Itself is a Strong Fine-tuner: Achieving 85.7% and 88.0% Top-1 Accuracy with ViT-B and ViT-L on ImageNet – arXiv Vanity

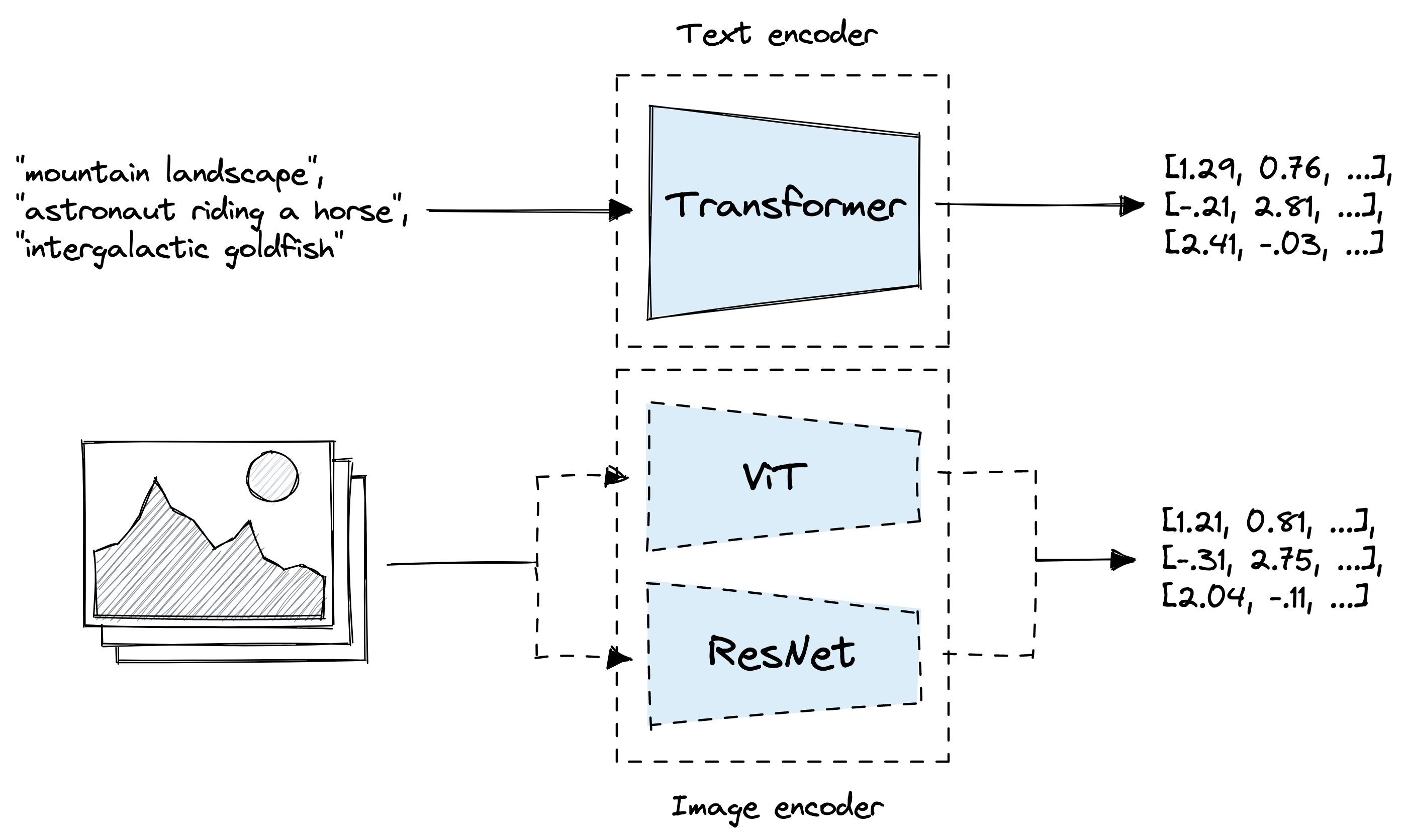

Review — CLIP: Learning Transferable Visual Models From Natural Language Supervision | by Sik-Ho Tsang | Medium

![[PDF] Enabling Multimodal Generation on CLIP via Vision-Language Knowledge Distillation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/7f71875f8214dffa4f3276da123c4990a6d437cc/8-Table2-1.png)

[PDF] Enabling Multimodal Generation on CLIP via Vision-Language Knowledge Distillation | Semantic Scholar